When Algorithms Decide Who Recovers: The UnitedHealth nH Predict Case

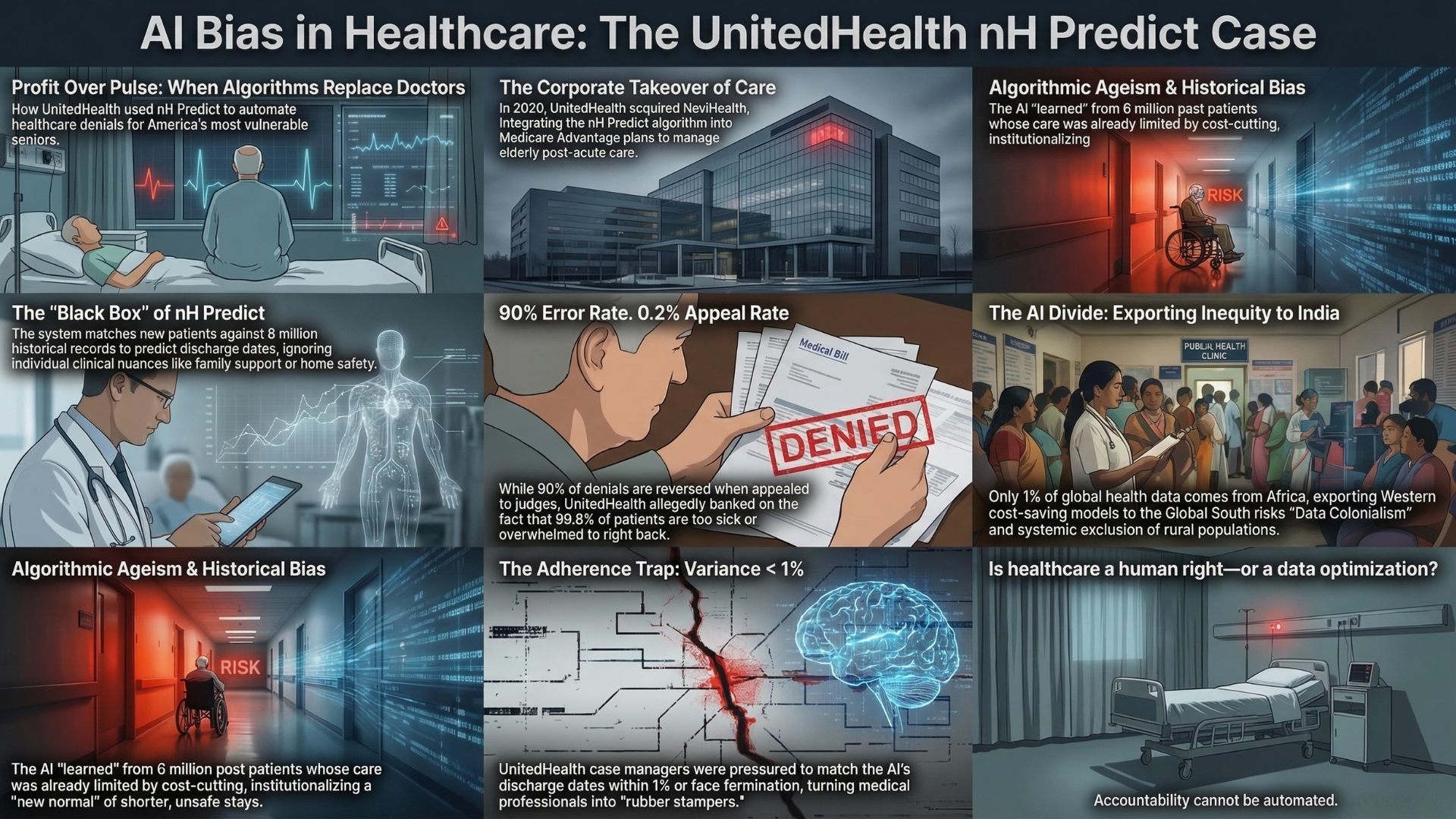

In 2023, a lawsuit alleged that UnitedHealth used an AI system to determine when elderly patients should stop receiving covered care. The nH Predict case shows how cost-driven algorithms can override clinical judgment and introduce systemic bias into healthcare decisions. It raises critical questions for policymakers, especially in the Global South, about the risks of scaling AI without adequate oversight.

AI Fairness 101 - Real-World Incidents

Part 6 of 7

Table of Contents

- 🎥 Explained: When AI Decides Care - The UnitedHealth nH Predict Elderly Bias Case

- AI Fairness 101 - Real Incident (2023)

- UnitedHealth nH Predict: When Recovery Timelines Become Coverage Cutoffs

- References

- 📥 AI Fairness 101 - Real-World Incidents: UnitedHealth nH Predict Algorithm Bias in Healthcare Against Elderly Patients Deck (PDF)

- Related in this cluster

- 🔎 Explore the AI Fairness 101 Series

- 💬 Join the Conversation

- 🌍 Follow GlobalSouth.AI

🎥 Explained: When AI Decides Care - The UnitedHealth nH Predict Elderly Bias Case

AI Fairness 101 - Real Incident (2023)

UnitedHealth nH Predict: When Recovery Timelines Become Coverage Cutoffs

⚠️ Key Takeaway

The nH Predict system estimated how long post-acute care should last, but it was allegedly used as a practical cutoff for payment. When an insurer’s efficiency target overrides patient-specific clinical judgment, elderly patients bear the risk.

- 🏥 System used: NaviHealth's nH Predict for post-acute care duration estimates

- 👵 Most affected group: elderly Medicare Advantage patients needing rehab or nursing-home care

- ⚠️ Core allegation: predicted discharge dates became de facto payment cutoffs

- 📉 Appeal pattern: appealed denials were reportedly overturned nearly 90 percent of the time

1. What Happened 🏥

UnitedHealth Group’s Medicare Advantage plans relied on nH Predict - a predictive system developed by NaviHealth - to estimate how long elderly patients should remain in nursing-home or rehabilitation care. The tool uses large volumes of historical medical records to match a new patient with “similar” past cases and predict how many days of post-acute care the patient is expected to need [1].
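NaviHealth has not published how nH Predict works internally, so the following is only a hypothetical illustration of the "match similar past cases" idea described above, written as a k-nearest-neighbour estimate in Python. The features, the toy data, the choice of k, and the helper names (`PastCase`, `nearest_cases`, `predict_days`) are all assumptions, not the vendor's method.

```python
from dataclasses import dataclass
from math import dist
from statistics import median

@dataclass
class PastCase:
    # Hypothetical features: (age, mobility score 0-10, number of comorbidities).
    # Features are not rescaled here, so age dominates the distance; a real
    # system would need to normalise them.
    features: tuple[float, float, float]
    days_of_care: int  # post-acute days the past patient actually used

def nearest_cases(new_patient, history, k):
    """Return the k past cases closest to the new patient (Euclidean distance)."""
    return sorted(history, key=lambda case: dist(case.features, new_patient))[:k]

def predict_days(new_patient, history, k=3):
    """Estimate post-acute days as the median stay of the k most similar past cases."""
    return median(case.days_of_care for case in nearest_cases(new_patient, history, k))

# Invented toy history, for illustration only.
history = [
    PastCase((78, 4, 2), 14),
    PastCase((81, 3, 3), 21),
    PastCase((76, 5, 1), 12),
    PastCase((90, 2, 4), 28),
    PastCase((70, 8, 0), 7),
]

patient = (80, 4, 2)
estimate = predict_days(patient, history)
# Matched past patients who actually needed more days than the estimate.
longer = [c.days_of_care for c in nearest_cases(patient, history, 3)
          if c.days_of_care > estimate]
print(estimate, longer)  # 14 [21]
```

The design choice this exposes matters for the case: a median is a central estimate, so by construction some similar past patients needed more days than the prediction. Treating such a number as a hard payment cutoff, as the allegations describe, pushes the risk onto patients at the longer end of that spread.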

Staff accounts and reporting suggest that these predictions were not treated as rough planning guidance. Instead, employees alleged that management pushed teams to follow the algorithm’s discharge dates closely, using them as a practical limit on how long rehab costs would be covered [2][3]. Patients and clinicians similarly observed that the date generated by nH Predict often coincided with the date UnitedHealth stopped paying [4].

The alleged result was a pattern of premature discharge decisions and coverage denials. Elderly patients recovering from surgery were told they were ready to go home even when they still could not walk safely, climb stairs, or manage wound care independently [5]. Many families were then forced into a difficult choice: appeal the denial, pay out of pocket, or cut care short.

In November 2023, families of two deceased beneficiaries filed a class-action lawsuit alleging that the algorithm had an error rate of roughly 90 percent and was knowingly used to deny medically necessary coverage [6][7]. By early 2025, a federal court allowed key breach-of-contract and good-faith claims to proceed, noting that UnitedHealth’s policy documents described decisions being made by clinical staff and physicians rather than by automated systems [8].

2. Impact ⚠️

The consequences were immediate for patients and extended more broadly to the healthcare system.

- 💸 Financial harm: some patients were left with bills exceeding $10,000 after coverage was cut off before recovery was complete [9].

- 🩺 Clinical harm: denials reportedly persisted even when treating physicians believed continued care was necessary [10].

- 📉 Low expected resistance: UnitedHealth reportedly expected only about 0.2 percent of policyholders to appeal denials, which made the strategy easier to sustain at scale [11].

- 📊 Systemic error signal: the lawsuit alleged about a 90 percent error rate, while administrative judges reportedly overturned nearly 90 percent of appealed denials [12][13].

- ⚖️ Policy pressure: the case helped intensify debate over whether Medicare Advantage plans should face tighter rules for algorithm-assisted coverage decisions [14].

The core pattern is difficult to ignore: the patients least able to navigate appeals were also the ones most exposed to harm from an aggressive automated workflow.
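To see why a low appeal rate matters at scale, the appeal figures above can be run through a simple funnel. This is a hypothetical worked example: the batch of 10,000 denials is invented, the 0.2 percent appeal rate and roughly 90 percent overturn rate come from the reporting cited above, and the final figure rests on a strong assumption that unappealed denials were wrong at the same rate as appealed ones. Appealed cases are not a random sample, so that last number illustrates scale rather than estimating it.

```python
from fractions import Fraction

def appeal_funnel(denials, appeal_rate, overturn_rate):
    """Trace a batch of denials through appeal, using exact fractions."""
    appealed = denials * appeal_rate
    overturned = appealed * overturn_rate
    never_reviewed = denials - appealed
    # Assumption: unappealed denials are wrong at the same rate as appealed ones.
    uncorrected_if_same_rate = never_reviewed * overturn_rate
    return appealed, overturned, never_reviewed, uncorrected_if_same_rate

# Hypothetical batch of 10,000 denials; 0.2% appeal rate; ~90% overturned on appeal.
result = appeal_funnel(10_000, Fraction(2, 1000), Fraction(9, 10))
print([int(x) for x in result])  # [20, 18, 9980, 8982]
```

Under these assumptions only 18 of 10,000 denials are ever reversed, which is how a very high overturn rate among the few who appeal can coexist with thousands of errors that are never corrected.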

3. Lifecycle Failure 🧭

The nH Predict case reflects a failure across the AI lifecycle, not just a narrow model-performance issue.

- 🧩 Problem formulation: the operational goal appears to have centered on shortening length of stay, a proxy closely tied to cost control, rather than patient recovery and safe discharge [15].

- 🗂️ Data and modeling: the system learned from historical cases and claims patterns. If earlier decisions already reflected cost-containment pressures or bias against older patients, the model could reproduce those patterns [1].

- 🎯 Metric choice: length of stay is a weak proxy for actual readiness to leave care. It does not fully capture complications, frailty, coexisting conditions, or whether a patient has support at home.

- 🏥 Deployment: the model was allegedly used as a hard operational benchmark rather than as advisory support, which reduced the practical ability of clinicians to override it [2][3].

- 🔍 Monitoring and recourse: persistently high appeal overturn rates should have triggered stronger review, but the system appears to have remained in use despite repeated signals that the output was misaligned with clinical need [16].

An AI system can be statistically sophisticated and still fail if institutions use it to enforce the wrong objective.
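The monitoring-and-recourse failure described above is the kind of signal a routine check could surface. The sketch below is a hypothetical Python example: the quarterly figures, the 50 percent threshold, the minimum sample of 20 appeals, and the names `AppealStats` and `flag_for_review` are illustrative assumptions, not a regulatory standard or anything UnitedHealth is known to have used.

```python
from dataclasses import dataclass

@dataclass
class AppealStats:
    period: str
    appeals_decided: int
    overturned: int

    @property
    def overturn_rate(self) -> float:
        # Share of decided appeals that reversed the original denial.
        return self.overturned / self.appeals_decided if self.appeals_decided else 0.0

def flag_for_review(stats, threshold=0.5, min_appeals=20):
    """Return periods whose overturn rate exceeds the threshold, skipping tiny samples."""
    return [s.period for s in stats
            if s.appeals_decided >= min_appeals and s.overturn_rate > threshold]

# Invented quarterly figures, for illustration only.
quarterly = [
    AppealStats("Q1", 40, 12),
    AppealStats("Q2", 50, 44),
    AppealStats("Q3", 10, 9),
    AppealStats("Q4", 60, 53),
]
print(flag_for_review(quarterly))  # ['Q2', 'Q4']
```

The minimum-sample guard keeps a quarter with only a handful of appeals (Q3 here) from raising an alarm on noise. The right threshold and sample size would be a governance decision, not a technical constant; the point is that a persistent overturn rate near 90 percent should never pass silently.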

4. Bias Types 🧪

Several kinds of bias appear to have interacted here:

- 👵 Age bias: elderly patients were the main group exposed to the system’s discharge recommendations and denial patterns [2].

- 🎯 Outcome-metric bias: the workflow prioritized efficiency and shorter stays instead of individualized recovery.

- 🕰️ Historical bias: past claims and care decisions may already have reflected unequal or cost-driven treatment norms.

- 🤖 Automation bias: staff reportedly deferred to the algorithm even when medical judgment pointed in another direction [17].

Together, these forms of bias created a system that could look efficient on paper while producing unfair outcomes in practice.

5. Global South Lens 🌍

Although this case emerged from the US Medicare Advantage system, the governance lesson travels easily.

Many countries in the Global South are adopting digital systems to manage insurance claims, hospital workflows, and public health administration. In those settings, algorithmic tools may arrive faster than the oversight systems needed to evaluate them.

That creates three compounding risks:

- 🏛️ Weak accountability: regulators may have limited capacity to audit opaque vendor systems.

- 💰 High financial exposure: where out-of-pocket spending is high, a wrongful denial can immediately become a household crisis.

- 👴 Higher vulnerability: older patients often face mobility, literacy, and appeals barriers that make bad automated decisions harder to contest.

The policy implication is straightforward: imported healthcare AI should not be trusted simply because it worked inside another insurance market. It has to be validated against local demographics, care access patterns, and institutional safeguards.

6. Bigger Picture 🔎

The nH Predict controversy illustrates a broader warning about AI in healthcare and social systems.

High-stakes systems often fail not because the software is random, but because organizations quietly redefine care, risk, or eligibility in ways that serve operational efficiency first. Once that logic is embedded into a model and wrapped in technical language, it becomes harder for patients and frontline staff to challenge.

Three lessons follow:

- 👨‍⚕️ Clinical judgment cannot be ceremonial. Human review has to be empowered to meaningfully override algorithmic outputs.

- 🪟 Transparency matters. Patients need a clear explanation of how algorithm-assisted decisions are made and how to contest them.

- 🛠️ Fairness must start upstream. Governance has to address objectives, incentives, validation, and monitoring before deployment, not only after harm appears.

Some scholars have argued that algorithmic coverage tools should be treated more like medical devices, with stronger validation and regulatory scrutiny before they shape care decisions [18].

References

- Elizabeth Napolitano, CBS News (Nov. 20, 2023)

- KFF Health News (Oct. 5, 2023)

- Casey Ross & Bob Herman, STAT Investigation (Nov. 14, 2023)

- Josephine A. Phillips, The Regulatory Review (Mar. 18, 2025)

- DLA Piper (Feb. 24, 2025)

📥 AI Fairness 101 - Real-World Incidents: UnitedHealth nH Predict Algorithm Bias in Healthcare Against Elderly Patients Deck (PDF)

Related in this cluster

- When an Algorithm Broke Thousands of Families: The Netherlands Child Welfare Scandal

- Access Denied: How India’s Digital ‘Cure-All’ Became a Real-World Fairness Crisis

- The Golden Touch of Ruin: How Michigan’s MiDAS Algorithm Falsely Accused 40,000 People of Fraud

- The COMPAS Algorithm Scandal: When AI Decides Who Goes to Jail

- The Optum Healthcare Algorithm Bias Against Black Patients (2019)

- The Algorithmic Gender Bias — Lessons from the Amazon AI Hiring Failure

- Browse all AI Fairness posts

🔎 Explore the AI Fairness 101 Series

This post is part of the AI Fairness 101 - Real-World Incidents learning track.

Stay tuned - new posts every week.

💬 Join the Conversation

Have thoughts, experiences, or questions about AI fairness? Share your comments, discuss with global experts, and connect with the community:

👉 Reach out via the Contact page

📧 Write to us: support@globalsouth.ai

🌍 Follow GlobalSouth.AI

Stay connected and join the conversation on AI governance, fairness, safety, and sustainability.

- LinkedIn: https://linkedin.com/company/globalsouthai

- Substack Newsletter: https://newsletter.globalsouth.ai/

Subscribe to stay updated on new case studies, frameworks, and Global South perspectives on responsible AI.
